US President Donald Trump: He’s hacked, but is he fired?

I have no idea how the election will go, and I don’t want to comment on politics here, but I’ve just read a very interesting article about the Trump campaign site being hacked.


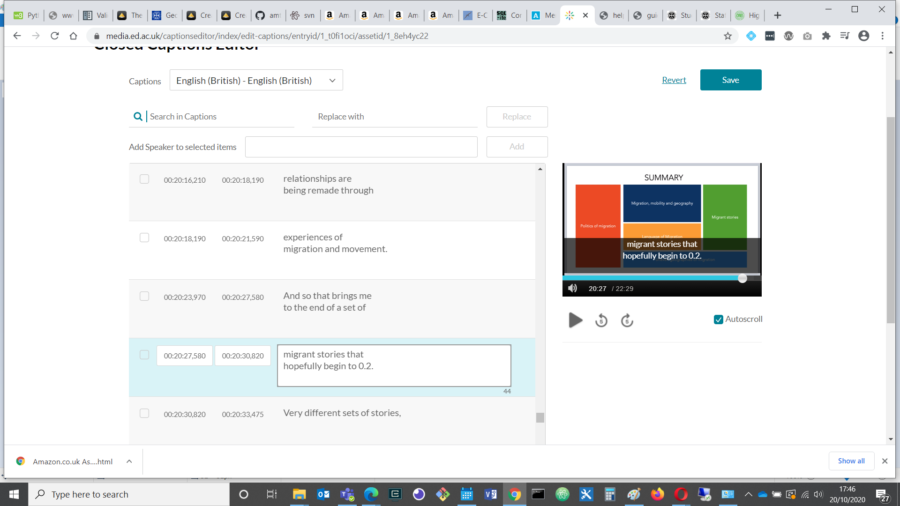

One of the unexpected but nice parts of my job lately has been editing the automated subtitles on some of our teaching videos.

This is a requirement for our course materials, to ensure our videos are more accessible to, for example:

According to research by Ofcom in 2006, 7.5 million people in the UK (18% of the population) used closed captions, and of these, only 1.5 million were deaf or hard of hearing. In other words, 80% of those who preferred to watch with subtitles used them for other reasons.

Since we moved to hybrid learning, we have had more and more teaching videos to work on. For me, that is an occasional silver lining of social distancing: subtitling is a nice, quiet and absorbing task, and our videos are often very interesting 🙂
