“Online surveys have become an increasingly popular method for collecting data quickly and inexpensively. However, one of their major drawbacks is the presence of bots: automated programs that can complete surveys, skewing the data and compromising the integrity of the results. When I ran my own cross-sectional study last year, I very quickly realised that my survey had been infiltrated by bots, and I decided to find out more about them so that I would know how to spot them, and stop them, in the future!”

The target population for my survey was mothers living in the UK with infants aged between 3 and 9 months. Quite famously, this is a group of people that spends a lot of time on social media, so I felt confident that social media would be a great way to recruit lots of participants very quickly. I put together my survey using Qualtrics, a survey platform that all staff and students in CAHSS can use for free. Then I checked how long it would take to fill out (around 20 minutes, as it happens), and finally put it out into the world via Twitter. I went to bed that night feeling confident that the results would roll in quickly and efficiently.

The next morning, when I checked my survey, I found that it had received 65 responses in the 8 hours since it went live. Let me tell you, I was excited to see that number, and so I immediately started to look at some of the responses from my participants. But very quickly it became clear to me that something wasn’t right, and I noticed some pretty glaring red flags…

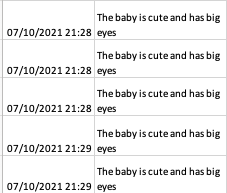

One of my questions (luckily, it turns out) was an open-ended question which asked, “what do you enjoy about your baby?”. I noticed very quickly that some of the answers were a) very repetitive and b) kind of unusual…

🚩Repetitive answers to the open-ended question:

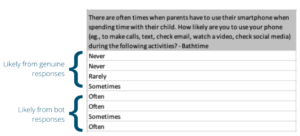

On top of this, the IP addresses (Internet Protocol addresses, the numerical addresses that identify internet-connected devices) were showing up as locations outside the UK. This might not be a problem by itself: I regularly use a VPN (a Virtual Private Network, which encrypts personal data and masks the user’s IP address), which makes it look as though my IP address is outwith the UK. However, other things were also concerning me. For example, multiple surveys had been started at exactly the same time, and answers to questions that we might consider “sensitive” did not seem like genuine responses…

🚩Responses to “sensitive” questions:

How I Detected the Bots

So, I knew that the bots had found my survey and were making a laughing stock of me. I needed a way to work out which responses were bots and which were from real people. After a couple of days of reading around, I decided to write a list of the red flags that I had seen and use triangulation (requiring more than one red flag before treating a response as a bot) so that I was detecting the bots without wrongly excluding real participants. In practice, any response with two or more of the following red flags was identified as a potential bot:

🚩Any response completed in less than 10 minutes (remembering that the survey took approximately 20 minutes to complete).

🚩Repetitive answers to open-ended questions. Bots may generate similar or identical responses to open-ended questions.

🚩Multiple responses coming from the same IP address or geographical location.

🚩Inconsistent responses to “sensitive” questions. Bots may provide contradictory answers to questions that ask for personal or sensitive information.

🚩Responses outside the inclusion criteria (in my case, an infant outside the 3 to 9 month age range, or a participant not living in the UK). Bots may not adhere to the criteria set for the survey and may provide irrelevant or nonsensical responses.

🚩Multiple responses from the same email address, if you are collecting email addresses. This may indicate bot activity.

REMEMBER: any one of these red flags on its own may just be an anomaly and does not necessarily mean that the response came from a bot. Once you have decided on your red flags, decide how many red flags per response are enough to identify a bot.
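If you export your responses, the two-or-more rule above could be automated with something like the following Python sketch. It is a minimal illustration under stated assumptions, not the process I actually used: the `Response` record and its field names are hypothetical (they are not the Qualtrics export format), and the `sensitive_consistent` field is assumed to be filled in by hand, because judging whether a sensitive answer is genuine really needs a human reader.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Response:
    """One exported survey response. All field names are hypothetical."""
    response_id: str
    duration_minutes: float
    ip_address: str
    open_text: str              # answer to "what do you enjoy about your baby?"
    email: str                  # empty string if not collected
    infant_age_months: int      # self-reported
    lives_in_uk: bool           # self-reported
    sensitive_consistent: bool  # assumed: set by hand after reading the answers


MIN_MINUTES = 10    # about half the ~20 minutes the survey took to complete
FLAG_THRESHOLD = 2  # two or more red flags -> potential bot


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so near-identical answers match."""
    return " ".join(text.lower().split())


def red_flags(r: Response, ip_counts: Counter, text_counts: Counter,
              email_counts: Counter) -> list[str]:
    """List the red flags raised by a single response."""
    flags = []
    if r.duration_minutes < MIN_MINUTES:
        flags.append("completed too quickly")
    text = normalise(r.open_text)
    if text and text_counts[text] > 1:  # blank answers are not counted as repeats
        flags.append("repetitive open-ended answer")
    if ip_counts[r.ip_address] > 1:
        flags.append("shared IP address")
    if not r.sensitive_consistent:
        flags.append("inconsistent sensitive answers")
    if not (3 <= r.infant_age_months <= 9 and r.lives_in_uk):
        flags.append("outside inclusion criteria")
    if r.email and email_counts[r.email.lower()] > 1:
        flags.append("shared email address")
    return flags


def potential_bots(responses: list[Response]) -> dict[str, list[str]]:
    """Map response_id -> flags, keeping only responses at or above the threshold."""
    ip_counts = Counter(r.ip_address for r in responses)
    text_counts = Counter(normalise(r.open_text) for r in responses)
    email_counts = Counter(r.email.lower() for r in responses if r.email)
    flagged = {}
    for r in responses:
        flags = red_flags(r, ip_counts, text_counts, email_counts)
        if len(flags) >= FLAG_THRESHOLD:
            flagged[r.response_id] = flags
    return flagged
```

Returning the list of flags, rather than just a yes/no, makes it easier to review each suspect response by hand before excluding it. Setting `FLAG_THRESHOLD` to 1 would flag every single anomaly, which is exactly what the REMEMBER note warns against.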

How Can We Stop the Bots?

To stop bots, it is important to put measures in place that go beyond just spotting them after the fact. So, knowing what I know now, what would I do differently, I hear you ask? There are a number of ways to make your surveys a little more “bot-proof”, and here are some of the measures that I would take in the future:

One way to stop bots is to use a CAPTCHA. CAPTCHAs are designed to distinguish between humans and bots by presenting a challenge that is easy for humans to solve but difficult for bots. There is an option in Qualtrics to embed a CAPTCHA (look in settings -> security); however, it is important to note that CAPTCHAs alone may not be enough to stop bots completely.

Another strategy is to include at least one open-ended question in the survey. Bots often struggle to give meaningful answers to open-ended questions, so these questions can help to identify them, just as the answers to my “what do you enjoy about your baby?” question did.

You can also repeat a question in the survey. Bots often give inconsistent answers to the same question, so asking it twice can help identify them.
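Once the data are exported, checking a repeated question comes down to a simple comparison. Here is a minimal Python sketch, assuming each response is a dictionary keyed by question ID; `Q5_baby_age` and `Q31_baby_age_repeat` are hypothetical IDs. Exact text matching is also a simplifying assumption: a real participant might reasonably write “6 months” once and “six months” the second time, so a mismatch is best treated as one red flag rather than proof.

```python
# Hypothetical question IDs: the same question asked early on and again later.
REPEATED_PAIRS = [("Q5_baby_age", "Q31_baby_age_repeat")]


def clean_answer(answer) -> str:
    """Compare answers as trimmed, lower-case text."""
    return str(answer).strip().lower()


def inconsistent_repeats(response: dict, pairs=REPEATED_PAIRS) -> list[tuple[str, str]]:
    """Return the repeated-question pairs whose two answers disagree."""
    mismatches = []
    for first, second in pairs:
        a, b = response.get(first), response.get(second)
        # Skip pairs where either copy of the question was left unanswered.
        if a is None or b is None:
            continue
        if clean_answer(a) != clean_answer(b):
            mismatches.append((first, second))
    return mismatches
```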

Attention or logic checks can also be used to catch bots. These checks ask questions that require the respondent to pay attention or follow a logical sequence, for example “To show you are reading carefully, please select ‘Strongly disagree’”. Bots may struggle to answer these questions correctly.
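An instructed-response item, such as one asking the respondent to select “Strongly disagree”, only works if it is scored afterwards. The Python sketch below is one way to do that with exported data. It assumes each response is a dictionary keyed by question ID; `Q12_attention` is a hypothetical ID, and treating a skipped check as a failure is a design choice you may want to change.

```python
# Hypothetical instructed-response items: question ID -> the only acceptable answer.
ATTENTION_CHECKS = {
    "Q12_attention": "Strongly disagree",
}


def failed_attention_checks(response: dict, checks=ATTENTION_CHECKS) -> list[str]:
    """Return the IDs of attention checks answered incorrectly.

    A missing answer counts as a failure, because it becomes an empty
    string that never matches the expected answer.
    """
    return [
        qid
        for qid, expected in checks.items()
        if str(response.get(qid, "")).strip().lower() != expected.lower()
    ]
```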

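Hidden questions are another tool for detecting bots. These questions are included in the survey but hidden from human respondents, so a person filling in the survey never sees them, while a bot working through the page’s underlying code may answer them anyway. If a hidden question has been answered, the response can be flagged as suspicious.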
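Checking a hidden (“honeypot”) question after export is straightforward. This is a minimal Python sketch, assuming each response is a dictionary with a `response_id` key and that `Q40_hidden` is the ID of the hidden question; both names are hypothetical. Depending on how the question is hidden, a screen-reader user might still come across it, so a hit here is best counted as one red flag rather than automatic proof of a bot.

```python
# Hypothetical ID of a question that human respondents never see.
HIDDEN_QUESTION = "Q40_hidden"


def answered_hidden_question(response: dict, qid: str = HIDDEN_QUESTION) -> bool:
    """True if the hidden question holds anything other than whitespace."""
    value = response.get(qid)
    return value is not None and str(value).strip() != ""


def honeypot_hits(responses: list[dict], qid: str = HIDDEN_QUESTION) -> list[str]:
    """Return the IDs of responses that answered the hidden question."""
    return [r["response_id"] for r in responses if answered_hidden_question(r, qid)]
```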

Conclusion

In conclusion, bots pose a significant challenge to the integrity of online surveys. However, by implementing strategies to spot and stop bots, researchers can make the data they collect more reliable and accurate. CAPTCHAs, open-ended questions, repeated questions, attention or logic checks and hidden questions can all help to identify and prevent bot activity. By taking these precautions, we can keep online surveys a valuable tool for collecting data from a wide range of participants. If you are interested in reading more about survey bots, check out these papers: Pozzar et al., 2020; Brainard et al., 2022.

And a disclaimer…

I originally prepared this material as a PowerPoint presentation, which I gave as part of the PGR Seminar Series in 2022. Since then, many people have asked me whether I had written up my experiences as a blog that they could read while creating their own surveys. I have been meaning to do that for a long time, but the thought of writing it all out again in words rather than pictures on slides was a little overwhelming.

And yet here it is, in part because I asked a bot to help me. I uploaded my slides to an AI platform, which helped me to turn them into a blog post, particularly the sections called “How I Detected the Bots” and “How Can We Stop the Bots?”. If I’m honest, the AI couldn’t fully capture my own experiences, but it did a great job of summarising the main points. So, yeah, bots can be a real nuisance, but there are some helpful ones out there, I suppose…