AI is increasingly part of making and distributing news. Organisations such as public service media (PSM) are implementing AI-driven production tools and decision aids that, among other things, profile audiences, personalise content, and automate tasks.

In this workshop, we asked:

- What are the pressing professional, technical, ethical, normative and organisational questions raised by AI in journalism?

- How is AI impacting PSM journalistic work (practices, processes, routines, and conventions)?

- If we agree that a more structural transformation of journalism is taking place – what needs researching in relation to PSM?

Context

Legacy news providers must now operate in a data-driven media ecosystem increasingly dominated by big platform players. This poses particular challenges for public service media, which must fulfil specific remits and subscribe to distinctive value frameworks. Developing, deploying, and dealing with AI – which acts to distribute cognition and control between humans and computational systems – causes disruption. It requires the reorganisation of resources and practices in order to open up opportunities to augment journalism. At the same time, it risks destabilising established mechanisms for applying values and undermining existing processes for ensuring responsible journalism. The risks are particularly acute for PSM, which are (usually) funded by taxpayers’ money and held to high standards of accountability and public scrutiny.

For an overview of applications of AI in journalism, please see this briefing note produced by project Co-PI Bronwyn Jones as part of the EPSRC-funded PETRAS project ‘Intelligible Cloud and Edge AI (ICE-AI)’.

Four main themes emerged from our discussions:

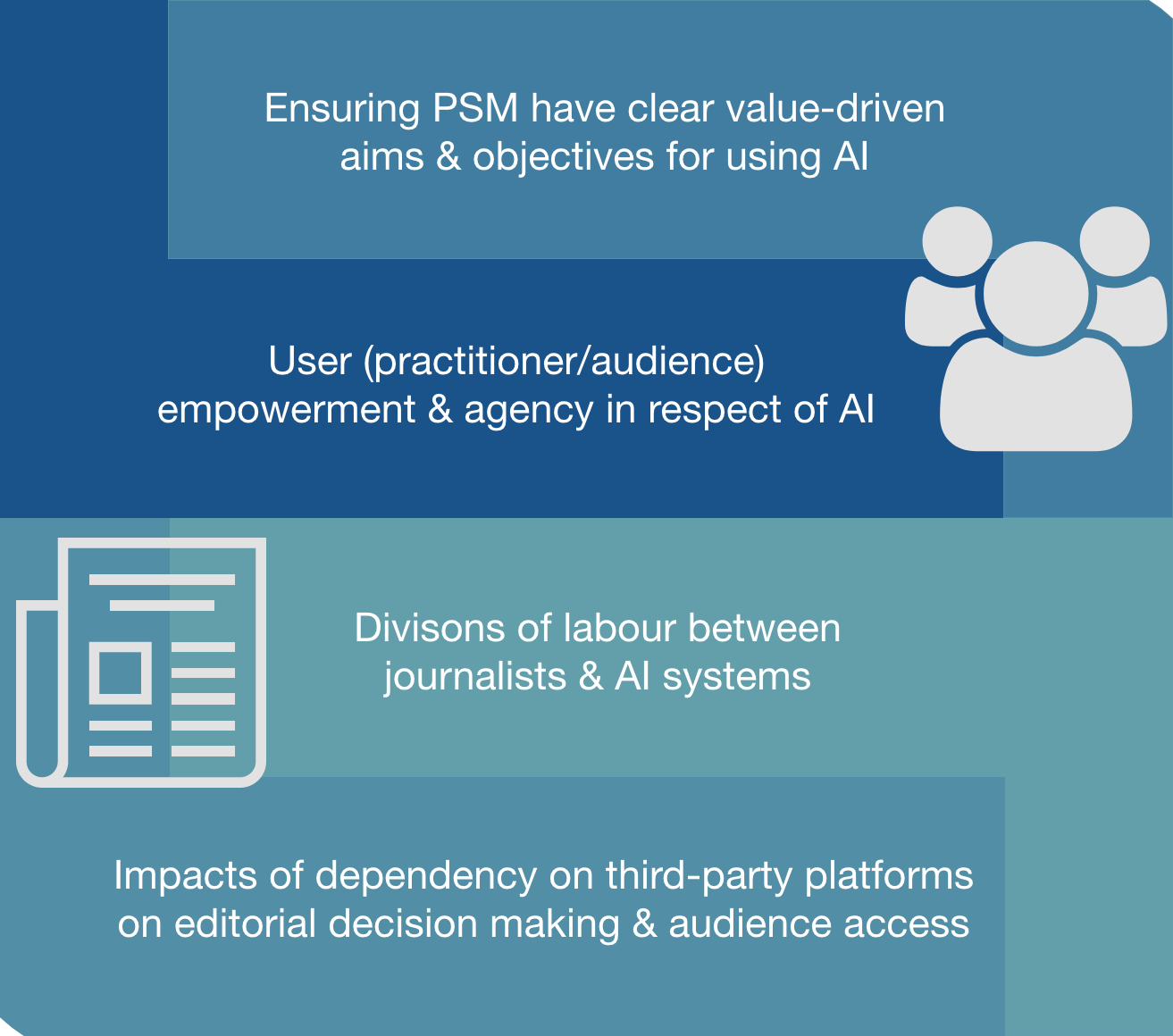

Ensuring PSM have clear value-driven aims & objectives for using AI

- PSM need to ask why they are using AI in their systems (e.g. is it about economic efficiency, such as speeding up processes, or about adding value, such as producing different types of news?) and to create strategies that align with their values and goals.

- A core challenge is the difficulty of defining PSM's stated values, translating them into computational form, and ensuring they are meaningfully and measurably enacted in PSM's socio-technical infrastructures.

- This includes asking how PSM can ensure their personalisation approaches are as unbiased and diverse as possible, what the conflicts and trade-offs might be, and what suitable metrics for optimisation and evaluation should be.

- This challenge also involves going back to basics: being explicit about exactly what PSM mean when they say universal, impartial, independent, diverse, etc., and considering whether these concepts need re-articulating for an AI- and data-driven era.

- It involves normative decisions about which design methods PSM agree with and why, including nudging, behavioural manipulation, etc.

- Transparency, explainability, and fostering understanding of AI are particularly important for PSM. However, more research is needed into the purposes and desired outcomes of these efforts (trust, empowerment/ability to challenge, oversight, accountability, etc.) and into which approaches are most effective and value-aligned.

User (practitioner/audience) empowerment & agency in respect of AI

- Users are often not brought into the conversation when research is done in industry.

- Users of these systems might be practitioners or audience members, but all will be affected by them. More research is therefore needed in collaboration with these stakeholders to understand a) their awareness, perceptions, and opinions of the use of AI and b) the impact of these systems, and to ensure c) their input into design.

- This will entail questions of transparency, explainability, and intelligibility.

- Personalisation and recommender systems are key research areas in this context, linked to the PSM obligation to provide value for everybody and value for money.

- Addressing fundamental questions about what values such as diversity and universality mean (in context) will be crucial. So will devising methods to implement these notions in justifiable ways and in relation to, and balance with, other PSM values.

- User interface design – as well as wider service design – will be important in ensuring goals (e.g. diversity) are achieved and tools are made usable and intelligible.

The impacts of dependency on third-party platforms (e.g. on editorial decision-making)

- Different forms of dependency exist, including infrastructural (e.g. Amazon Web Services), exposure (e.g. social media platforms determining who sees what), and data (e.g. YouTube and Facebook deciding what data and metrics PSM get out of their relationship).

- Journalists and PSM often do not have the in-house technology to implement AI systems alone. They rely on third parties (often large tech companies) to contract with and to provide services, technologies, and platforms. This allows only limited agency over model building and training, and restricts visibility and control of the ethics and values behind the technology.

- More research is needed on how the use of these technologies is linked to editorial freedom, and on whether there should be some public oversight of their use.

- Issues of censorship need exploring. These include not just algorithmic curation of what gets seen (e.g. on YouTube and Facebook) but also the preferential treatment that allows some third parties to have public service media content removed from platforms (e.g. original PSM content being removed after rights-infringement claims by parties operating as preferential partners of these platforms).

- Keeping up with all the required third-party relationships can be resource- and time-intensive.

The division of labour between journalists & AI systems, including impacts on work practices, editorial decisions, meaning-making, and creativity

- There is a need to better understand when and where control is shifting to computational systems, and what this means for the public interest.

- Who decides which AI solutions to use, and on the basis of what criteria?

- We need to recognise that AI is currently a “luxury problem”: an issue for well-resourced PSM, and one likely to widen the gap between smaller and larger outlets. This raises the question of whether collaborative partnerships, open sourcing, or other knowledge and data/model transfer mechanisms could be used to bridge the gap.

- What new roles and role conceptualisations are emerging, and with what implications for working ethos and practice? Examples include newsroom tech developers, journalists in research and development, and the journalist as ‘signal generator’ for systems.

- We must remember not to overlook the more fundamental questions. What is journalism? Do we need to rethink journalism in the context of new forms of algorithmic and AI-driven systems? Do professional, editorial, and ethical codes need to be rethought?

Next steps:

Building a network

We will continue to bring researchers into conversation with industry to build a network of experts interested in ensuring AI works in the public interest in media and journalism. If you want to be kept in the loop, please contact: Bronwyn.jones@ed.ac.uk

Constructing a research agenda

We will take the insights from this workshop and build a mission-driven research agenda – identifying funding opportunities and scoping out work packages that address these pressing issues of societal significance.

With thanks to our participants

Dr Bronwyn Jones

Postdoctoral Research Associate in Intelligible AI, University of Edinburgh

Dr Kate Wright

Senior Lecturer and Chancellor’s Fellow in Media and Communication, University of Edinburgh

Dr Paolo Cavaliere

Lecturer in Digital Media & IT Law, University of Edinburgh

Anna Rezk

PhD Candidate, University of Edinburgh

Dr Maria Michalis

Reader in Communication Policy, University of Westminster

Nick Mattis

PhD Candidate, VU Amsterdam

Valeria Resendez Gomez

Faculty of Social and Behavioural Sciences, University of Amsterdam

Dr Marijn Sax

Faculty of Law, University of Amsterdam

Alexandre Rouxel

Data Scientist, European Broadcasting Union

Derek Bowler

Head of Social Newsgathering, European Broadcasting Union

David Caswell

Head of BBC News Labs

Dr Rhia Jones

Research Lead, BBC