Optimisations for OpenBLAS enabled on newer hardware

We’ve made a wee change to hopefully improve the performance of the OpenBLAS linear algebra library on our newer Linux hosts, including our newest Slurm compute nodes. Read on to find out more.

What is OpenBLAS?

OpenBLAS is a high performance implementation of BLAS (Basic Linear Algebra Subprograms) – a bunch of useful code routines for doing matrix multiplications and other fun linear algebra stuff. BLAS is used by lots of codes – including (sometimes) Python’s key NumPy package, and the CASTEP molecular dynamics software used by folks in our Institute for Condensed Matter & Complex Systems.

There are different “implementations” of the BLAS codes available. We’ve chosen OpenBLAS as the default BLAS on our own Ubuntu Linux Platform as that is considered to be one of the best performing and most reliable options available. Part of OpenBLAS’s great performance is down to how it includes multiple different “code branches” for key routines that allow it to take advantage of optimisations available in fancier CPUs – in particular vector extensions that allow multiple identical calculations to be executed as a single unit.
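To make "matrix multiplication routine" a bit more concrete, here's a minimal pure-Python sketch of what a BLAS GEMM call computes: C ← αAB + βC. It is only a reference for the arithmetic. The function name `gemm` and the list-of-rows layout are my own choices for illustration, and it's nothing like the tuned, vectorised kernels that OpenBLAS actually runs.

```python
def gemm(alpha, a, b, beta, c):
    """Return alpha * (a @ b) + beta * c for matrices stored as lists of rows."""
    n_rows, n_inner, n_cols = len(a), len(b), len(b[0])
    result = []
    for i in range(n_rows):
        row = []
        for j in range(n_cols):
            # Dot product of row i of a with column j of b.
            acc = sum(a[i][k] * b[k][j] for k in range(n_inner))
            row.append(alpha * acc + beta * c[i][j])
        result.append(row)
    return result


# Complex entries, as handled by the double-precision complex variant (zgemm).
a = [[1 + 1j, 2], [0, 1j]]
b = [[1, 0], [0, 1]]  # identity matrix, so a @ b equals a
c = [[0, 0], [0, 0]]
print(gemm(1, a, b, 0, c))
```

An optimised BLAS does the same sums, but in blocks sized for the CPU's caches and using vector instructions, which is why picking the right code branch for the CPU matters so much.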

What is the problem?

During some recent testing of our brand new AMD EPYC Zen 3 CPUs, I noticed that the CASTEP molecular dynamics code performed surprisingly poorly on these nodes compared to the Zen 2 CPUs on our existing compute nodes. After a bit of digging (in particular, running CASTEP through a code profiler so that I could see what it was doing in more detail), I realised that CASTEP was spending a lot of time calling OpenBLAS’s zgemm() matrix multiplication function. The version of OpenBLAS we have on our Ubuntu 20.04 Platform wasn’t recognising these new Zen 3 CPUs, so it was falling back on safer but slower code branches suitable for old CPUs, rather than the newer and faster code branches that take advantage of the AVX2 vector extensions available on these CPUs.

Luckily, OpenBLAS provides a simple mechanism to tell it to use specific CPU optimisations, rather than relying on trying (and possibly failing) to auto-detect the CPU type. This is done via an environment variable called OPENBLAS_CORETYPE, which can be set to a specific value to tell it which CPU family we have, or it can be unset to tell OpenBLAS to try to detect the CPU type itself. (Using environment variables to control how things work is really common in Linux!)
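If you'd like to experiment with this yourself, here's a small sketch of how a program could be launched with OPENBLAS_CORETYPE set (or cleared) for just that one run. The helper name `env_with_coretype` is made up for this example, and the child command simply echoes the variable back rather than running a real OpenBLAS workload.

```python
import os
import subprocess
import sys


def env_with_coretype(coretype, base_env=None):
    """Return a copy of the environment with OPENBLAS_CORETYPE set,
    or removed if coretype is None."""
    env = dict(os.environ if base_env is None else base_env)
    if coretype is None:
        env.pop("OPENBLAS_CORETYPE", None)   # let OpenBLAS auto-detect
    else:
        env["OPENBLAS_CORETYPE"] = coretype  # e.g. "Zen"
    return env


if __name__ == "__main__":
    # The child process sees the variable; the parent's environment is untouched.
    # Here the child just echoes the value it was given.
    child = [sys.executable, "-c",
             "import os; print(os.environ.get('OPENBLAS_CORETYPE'))"]
    subprocess.run(child, env=env_with_coretype("Zen"), check=True)
```

The variable needs to be in place before the program starts, which is why it's passed in via the child's environment here. According to the OpenBLAS documentation, setting OPENBLAS_VERBOSE=2 as well should make builds with runtime CPU detection report which core type they picked, which is a handy way to check the setting has taken effect.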

So this provides a nice solution in our case.

What change have we made?

In order to improve OpenBLAS performance on newer CPUs, we are now setting (or clearing) the OPENBLAS_CORETYPE environment variable whenever you log into one of our Ubuntu Linux hosts so that OpenBLAS will do the right thing. In particular:

- On newer hosts – where OpenBLAS isn’t detecting the CPU type correctly – we are now manually setting OPENBLAS_CORETYPE to an appropriate value for the host’s CPU. (For example, we set this to “Zen” on the AMD EPYC Zen CPUs we have in our phcomputeNNN compute hosts.)

- On older hosts – where OpenBLAS detects the CPU type fine – we explicitly unset OPENBLAS_CORETYPE to let OpenBLAS continue to auto-detect the CPU itself.

We’re also doing this on the compute nodes in our Slurm Compute Cluster so that OPENBLAS_CORETYPE always gets set correctly just before your job starts running.
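The two cases above boil down to a small decision, which can be pictured roughly like this. This is an illustration only, not the actual login script: the function names are invented, and the CPU-name pattern in the table is a made-up placeholder (only the "Zen" value comes from this post).

```python
# Hypothetical table: substring of the CPU model name -> OPENBLAS_CORETYPE value.
# "EXAMPLE-NEW-CPU" is a placeholder pattern, not a real CPU model string.
EXAMPLE_OVERRIDES = {"EXAMPLE-NEW-CPU": "Zen"}


def coretype_for(cpu_model, overrides=EXAMPLE_OVERRIDES):
    """Return the core type to force for this CPU, or None to keep auto-detection."""
    for pattern, coretype in overrides.items():
        if pattern in cpu_model:
            return coretype
    return None


def shell_line(coretype):
    """Return the shell command a login script could run for this decision."""
    if coretype is None:
        return "unset OPENBLAS_CORETYPE"
    return f"export OPENBLAS_CORETYPE={coretype}"


print(shell_line(coretype_for("Some EXAMPLE-NEW-CPU 64-Core Processor")))
print(shell_line(coretype_for("An older CPU that OpenBLAS already recognises")))
```

Explicitly unsetting the variable in the second case means a stale value inherited from somewhere else can't accidentally force the wrong code branch on an older host.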

This should improve the performance of codes that use OpenBLAS when running on newer hosts. These newer hosts include:

- The newest AMD EPYC Zen 3 nodes in our Slurm compute cluster. (I’ve included an example of this below.)

- The CPLab PCs in JCMB 4325D and ROE U3.

- New Linux desktops and workstations with new AMD Ryzen CPUs.

What does this change mean for you?

You probably don’t need to do anything! Just sit back and enjoy (hopefully!) better performance if you run any codes that use OpenBLAS on newer hardware, such as CASTEP and maybe some bits of NumPy.

In the unlikely event that you were already setting the OPENBLAS_CORETYPE variable yourself, then you’ll probably want to stop doing that and let us now manage this for you.
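If you can't remember whether you ever set this yourself, a quick scan of your shell startup files will tell you. Here's a rough sketch; the list of file names is an assumption based on common Bash setups, so adjust it if you use a different shell or keep your settings elsewhere.

```python
from pathlib import Path

# Assumed list of common Bash startup files; adjust for your own shell.
STARTUP_FILES = [".bashrc", ".bash_profile", ".profile"]


def find_coretype_settings(home, names=STARTUP_FILES):
    """Return (file, line number, text) for each line mentioning OPENBLAS_CORETYPE."""
    hits = []
    for name in names:
        path = Path(home) / name
        if not path.is_file():
            continue
        lines = path.read_text(errors="replace").splitlines()
        for number, text in enumerate(lines, start=1):
            if "OPENBLAS_CORETYPE" in text:
                hits.append((str(path), number, text.strip()))
    return hits


if __name__ == "__main__":
    for file, number, text in find_coretype_settings(Path.home()):
        print(f"{file}:{number}: {text}")
```

No output means none of those files mention the variable. It's also worth checking any Slurm job scripts you reuse, since a setting there would apply only to those jobs.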

Example case

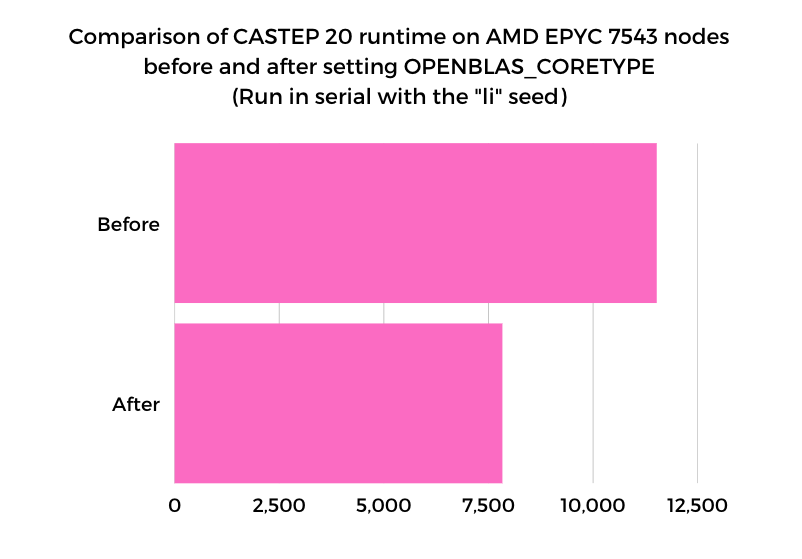

Here’s an example of the effect of this change when running CASTEP 20 on one of our newest AMD EPYC Zen 3 hosts. Here I’ve run CASTEP in serial using the “li” example seed data.

- With OPENBLAS_CORETYPE unset, the run time was 11510s.

- After setting OPENBLAS_CORETYPE=Zen, the run time was dramatically reduced (improved!) to 7823s.
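To put a number on "dramatically", here's the arithmetic for those two timings, using only the run times quoted above:

```python
before_s = 11510  # run time with OPENBLAS_CORETYPE unset
after_s = 7823    # run time with OPENBLAS_CORETYPE=Zen

speedup = before_s / after_s
reduction_pct = 100 * (before_s - after_s) / before_s

print(f"speedup: {speedup:.2f}x, run time reduced by {reduction_pct:.0f}%")
# prints "speedup: 1.47x, run time reduced by 32%"
```

Bear in mind this is a single serial run of one test case, so treat it as an indication rather than a proper benchmark.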

I might post some more detailed graphs covering parallel performance on various new hosts as well, but that’ll be a story for later…

Help?!

If you have any questions about these changes, then please do ask us!

- You can email the School Helpdesk: sopa-helpdesk@ed.ac.uk

- Alternatively, you can post in the SoPA Research Computing space in Teams.
