This post is part of a series on marking remote exams.

We’re using Gradescope to mark 5 of our remote exams at the moment. Here, I’ll outline the process that we’ve used.

Preparation

As with all our exams, we go through a process to prepare a folder of anonymised PDFs, one for each student.

Gradescope provides two different types of assignment:

- Exam / Quiz – where the instructor uploads a batch of scripts, but these all need to have a consistent layout (e.g. when students complete an exam on a pre-printed booklet).

- Homework / Problem Set – where the student can upload a script of any length, and then identify which questions appear on which pages.

Unfortunately, neither of these quite fits our situation – we have a set of variable-length scripts, but we need to upload them ourselves (since we wanted students to have a consistent submission experience across exams, whether or not we used Gradescope to mark them).

Fortunately my colleague Colin Rundel is a wizard with R and he was able to semi-automate the process of uploading each script individually to the “Homework / Problem Set” assignment type. All our marking is done anonymously, so we’re only using the students’ Exam Number in the Gradescope class list, and for each student Colin’s R script uploads their PDF submission.

Zoning

Once the scripts are uploaded, we still need to identify which questions are on which pages – a process I’ve taken to calling “zoning” since that’s the terminology used in RM Assessor (one of the other tools we’ve been trying).

To do this, we’ve employed several PhD students, who would normally have been helping out with various marking jobs for our 1st/2nd year courses (but those exams were cancelled for this diet).

These PhD students were set up as TAs in the course, and tasked with marking up which questions were on each page, just like the students would normally do in Gradescope. This is a surprisingly difficult workflow in Gradescope, requiring multiple clicks to move between scripts (and there is no summary of which scripts have been “zoned”). To get round this, Colin prepared a spreadsheet with direct links to each script in Gradescope, and the zoners used this to keep track of which ones they had completed (and to note any issues). I wrote some very brief instructions on the process (PDF) – this included a short video clip of me demonstrating how to do it, but I’ve redacted that here because it shows student work.
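As a rough illustration of the tracking spreadsheet idea (this is not Colin’s actual spreadsheet or R code), here is a minimal Python sketch. The column names, the example exam numbers and the `example.org` links are all hypothetical placeholders; real links would have to be collected from Gradescope itself.

```python
import csv
import io

# Hypothetical column layout: one row per script, with blank cells for the
# zoners to fill in as they work through the list.
COLUMNS = ["exam_number", "link", "zoned_by", "issues"]


def build_tracking_sheet(scripts):
    """Return CSV text with one row per (exam_number, link) pair."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for exam_number, link in scripts:
        writer.writerow(
            {"exam_number": exam_number, "link": link, "zoned_by": "", "issues": ""}
        )
    return buffer.getvalue()


def not_yet_zoned(sheet_text):
    """List the exam numbers whose 'zoned_by' cell is still empty."""
    reader = csv.DictReader(io.StringIO(sheet_text))
    return [row["exam_number"] for row in reader if not row["zoned_by"].strip()]


if __name__ == "__main__":
    # Placeholder exam numbers and links, for illustration only.
    sheet = build_tracking_sheet(
        [
            ("B000001", "https://example.org/submissions/1"),
            ("B000002", "https://example.org/submissions/2"),
        ]
    )
    print(sheet)
    print(not_yet_zoned(sheet))
```

The second function stands in for the summary that Gradescope does not provide: it reports which scripts nobody has marked as zoned yet.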

Marking

The process in Gradescope is based on using rubrics (see https://www.gradescope.com/get_started). These can work with either positive or negative marking; we have been using the default of negative marking in the exams so far, which is different to our usual practice but seems to work best in this system. Essentially, for each question, you develop a list of common errors, each with an associated number of marks to take off. You can then tag responses with any errors that occur, which gives more useful feedback about what went wrong.
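To make the arithmetic of negative marking concrete, here is a minimal Python sketch. It is only an illustration of the idea, not how Gradescope implements it: the rubric items and mark values are invented, and flooring the score at zero is an assumption.

```python
def score_response(max_marks, rubric, selected_items):
    """Negative marking: start from full marks and subtract each tagged error.

    rubric maps an error description to the marks deducted for it.
    Flooring the result at zero is an assumption of this sketch.
    """
    deduction = sum(rubric[item] for item in selected_items)
    return max(0, max_marks - deduction)


# Invented rubric for a 5-mark question.
rubric = {
    "sign error": 1,
    "missing justification": 2,
    "wrong method": 4,
}

print(score_response(5, rubric, ["sign error", "missing justification"]))  # 2
print(score_response(5, rubric, []))  # 5 (no errors tagged, so full marks)
```

The list of tagged items doubles as feedback: the student sees which errors cost them marks, not just the total.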

Each course has worked a little differently, but the basic idea is for the Course Organiser to develop a rubric and make sure it works on the first 10-15 scripts.

- Sometimes the CO has developed the rubric first, then gone through the first 10-15 scripts to check it made sense and make any adjustments.

- Other times, the CO has just started marking the first 10-15 scripts, and used that process to develop the rubric.

Other markers can then be assigned a question (or group of question parts) each, and they go through applying the rubric. We’ve asked that they flag any issues with the rubric to the CO rather than editing it directly themselves (e.g. to add a new item for an error that doesn’t appear already, or if it seems that the mark deduction for one item is too harsh given the number of students making the error).

A nice feature is that you can adjust the marks associated with a rubric item, and the change is applied automatically to all previously marked scripts.

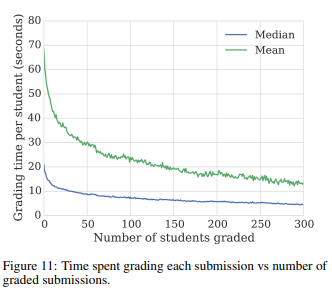
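One way to see why adjusting a rubric item can update every script at once is to imagine that each marked script stores which rubric items were tagged, rather than a fixed number. The Python sketch below works under that assumption; it is a guess at the general idea, not a description of Gradescope’s internals, and the exam numbers and rubric item are invented.

```python
def all_scores(max_marks, rubric, tags_by_script):
    """Recompute every script's score from its tagged rubric items."""
    return {
        script: max_marks - sum(rubric[item] for item in items)
        for script, items in tags_by_script.items()
    }


# Invented data: three scripts marked on a 5-mark question.
rubric = {"sign error": 2}
tags_by_script = {
    "B000001": ["sign error"],
    "B000002": [],
    "B000003": ["sign error"],
}

before = all_scores(5, rubric, tags_by_script)

# The CO decides the deduction was too harsh and changes it in one place.
rubric["sign error"] = 1
after = all_scores(5, rubric, tags_by_script)

print(before)  # {'B000001': 3, 'B000002': 5, 'B000003': 3}
print(after)   # {'B000001': 4, 'B000002': 5, 'B000003': 4}
```

Because the tags are untouched, nobody has to revisit the scripts that were already marked.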

Feedback from markers so far has been very positive. They have found the system intuitive to use, and commented that being able to move quickly between all attempts at a particular question has meant that they can mark much more quickly than on paper. Gradescope have also done some analysis of data from many courses and found that markers tend to get quicker at marking as they work through the submissions.

Moderating

Once marking is completed, the CO can look through the marking to check for any issues. The two main ways of doing this are:

- checking through a sample (10-20 scripts) of each question, to make sure the rubric is being applied consistently (and following up with further checking if there are any issues).

- checking whole scripts, particularly any which are failing or near the borderline.
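The two checks above could be prepared outside Gradescope from a list of exam numbers and total marks. The Python sketch below is one possible approach, not the procedure we followed: the pass mark of 40, the 3-mark margin and the fixed random seed are all assumptions chosen for illustration.

```python
import random


def moderation_sample(script_ids, size=15, seed=0):
    """Pick a reproducible random sample of scripts to check for one question."""
    ids = sorted(script_ids)
    rng = random.Random(seed)  # fixed seed so the same sample can be regenerated
    return sorted(rng.sample(ids, min(size, len(ids))))


def borderline_scripts(totals, pass_mark=40, margin=3):
    """Return scripts that fail or sit within `margin` marks above the pass mark."""
    return sorted(
        script for script, total in totals.items() if total < pass_mark + margin
    )


if __name__ == "__main__":
    # Invented exam numbers and totals.
    totals = {"B000001": 35, "B000002": 41, "B000003": 70}
    print(moderation_sample(totals, size=2))
    print(borderline_scripts(totals))  # ['B000001', 'B000002']
```

Sorting the IDs before sampling means the sample depends only on the seed and the set of scripts, not on the order in which they were listed.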

Gradescope provides the facility to download a spreadsheet showing the mark breakdown for each script, and also a PDF copy of the script showing which rubric items were selected for each question part. We’ll be able to make those available for the moderation and Exam Board process.

Conclusions

Gradescope is clearly a powerful tool for marking, and I think we will need something like this if we are to do significant amounts of on-screen marking in future.

However, it does come with some issues – we had to work around the fact that it is not designed for the way we needed to use it. For long-term use it would make sense to have the students tag up which questions appear on which pages, but that would require integration with our VLE and would add a further layer to the submission process for students (and another tool/system to learn to use). I was also concerned to see news that Gradescope crashed during an exam for a large class in Canada, and there are obvious issues about outsourcing such a sensitive function.
