My role

In this project, I was mainly responsible for designing and composing the sound: recording, designing sound effects, and composing music. I also explored techniques for sound-image interaction, helping the group realise a live performance in which M5Stick sensors and Max followed the actors’ movements in real time to generate semi-automated music. This was my first attempt at composing music through MIDI, together with designed sound effects, to match the atmosphere of each of the project’s three stages. I also designed the audio system to fit the performance area, so that the audience could hear the rich sonic detail during the live performance.

During the performance, I took part as the sound performer, controlling all the sound output, adjusting the balance between the multiple sound elements, and mixing the sound for each scene in real time.

Technical Approach

For the recording, I captured the sounds of many common household objects, such as fluttering curtains, torn fabric, squashed balloons and twisted threads. These subtle sounds of family life often feel amplified in a depressingly intimate setting. I processed them by equalising, layering, or adding effects such as delay and reverb to create a soundscape that could sit alongside whispered and chanted vocals and build the character’s inner atmosphere.

When composing the three parts, I focused on using hollow synth sounds as a backdrop and a distorted piano as the melody to tell the story. For the dance in the second part, I used powerful drums and crisp percussion to build a disorienting, tense musical base.

For the human-sound interaction, I changed part of the sound input in the first part of the performance so that it came from the performer on stage. Words of teaching and reprimand became part of the sound performance, and these sounds in turn affected the image, distorting it.

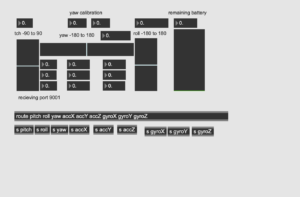

In the second part of the performance, many components of the music are generated from the performer’s movements. As she touches the strings around her to make the bells ring, the M5Stick captures her hand movements and the music is composed in real time. The M5Stick, using Arduino, is connected to Max over the same LAN. Max receives real-time yaw, roll and pitch data from the M5Stick, scales the values, and uses them to trigger changing timbres, pitches and rhythms.

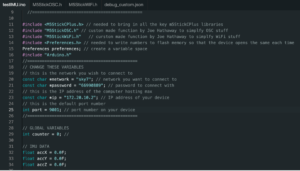
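The scaling step described above can be illustrated with a minimal Python sketch. This is not the Max patch used in the performance. The angle ranges, the pentatonic scale, the velocity and tempo ranges, and the names `scale`, `NoteEvent` and `orientation_to_note` are all assumptions chosen only to show how orientation angles might be mapped onto musical parameters.

```python
from dataclasses import dataclass

# Assumed sensor ranges in degrees; the real M5Stick output may differ.
PITCH_RANGE = (-90.0, 90.0)
MAX_ANGLE = 180.0  # assumed maximum magnitude of yaw and roll

# Assumed musical targets, chosen only for illustration.
PENTATONIC = [0, 2, 4, 7, 9]  # major pentatonic degrees in semitones
BASE_NOTE = 60                # MIDI note number for middle C
NUM_STEPS = 10                # two pentatonic octaves


def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping the input."""
    clamped = min(max(value, in_lo), in_hi)
    ratio = (clamped - in_lo) / (in_hi - in_lo)
    return out_lo + ratio * (out_hi - out_lo)


@dataclass
class NoteEvent:
    midi_note: int    # which note to play
    velocity: int     # how loud (MIDI velocity, 0-127)
    interval_ms: int  # time until the next note


def orientation_to_note(yaw, roll, pitch):
    """Hypothetical mapping: pitch angle -> note, roll -> loudness, yaw -> rhythm."""
    # The pitch angle selects one of NUM_STEPS scale steps.
    step = min(int(scale(pitch, *PITCH_RANGE, 0, NUM_STEPS)), NUM_STEPS - 1)
    octave, degree = divmod(step, len(PENTATONIC))
    midi_note = BASE_NOTE + 12 * octave + PENTATONIC[degree]

    # A larger roll magnitude gives a louder note.
    velocity = round(scale(abs(roll), 0.0, MAX_ANGLE, 30, 127))

    # A larger yaw magnitude gives a shorter gap between notes (faster rhythm).
    interval_ms = round(scale(abs(yaw), 0.0, MAX_ANGLE, 600, 120))
    return NoteEvent(midi_note, velocity, interval_ms)


if __name__ == "__main__":
    print(orientation_to_note(yaw=45.0, roll=-30.0, pitch=10.0))
```

Quantising to a fixed scale is one way to keep movement-driven notes consonant however the hand moves. In this sketch, clamping the input keeps readings outside the expected range from producing invalid MIDI values.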

Wi-Fi Setup

M5Stick and Max Connection

Reflections

My compositional techniques were limited, as this was my first time composing and I lacked experience, which made it challenging to write melodies with layered, evolving feeling. In the semi-algorithmic composition in Max, I did not add enough sound elements to match the intense action of the characters, and there are still many Max objects I need to master. In the second stage of exploring sound-image interaction, the attempt to use Max as a sender of output volume data caused the parameters in Processing to change, which prevented the visual particle dispersion effect from working. In the future, I will focus more on learning techniques and exploring more possibilities for sound interaction.

Documents

Part 1 Mixing https://drive.google.com/file/d/1mFLVemaLbHSIs_EXReBWH9cNFqvpOlIB/view?usp=share_link

Part 2 Composing https://drive.google.com/file/d/1hJ__2rSRBj7yTwlH3oCR8tdIHqadInnu/view?usp=share_link

Part 2 Max Patchers https://drive.google.com/file/d/1xCIy2S-RgqypvWlqYt3FvaF5v551XoO7/view?usp=share_link

Part 3 Mixing https://drive.google.com/file/d/1UBbC0qdSQUK-sPXm9zSnHK_XTKBExe_W/view?usp=share_link