Link of PDF File:

Link of Performance Video:

Link of Related Files:

https://uoe-my.sharepoint.com/personal/s1934638_ed_ac_uk/_layouts/15/onedrive.aspx?id=%2Fpersonal%2Fs1934638%5Fed%5Fac%5Fuk%2FDocuments%2FPerformance%20File&view=0

dmsp-performance24

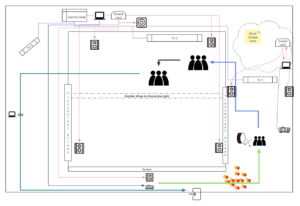

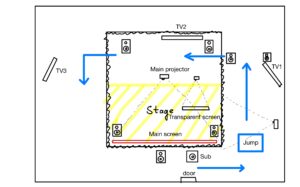

The overall audio system adhered to the initial design, primarily divided into two zones controlled by two computers. The only change was the relocation of the subwoofer from the front to the back of the stage. This adjustment was primarily made because the actors needed to move beneath the screen at the front, and placing the sound equipment there could potentially cause safety issues.

During the pre-show stage setup, our initial idea was to hang the speakers above the screen. However, due to safety regulations and time constraints, this could not be implemented, leading us to opt for a ground-based setup instead.

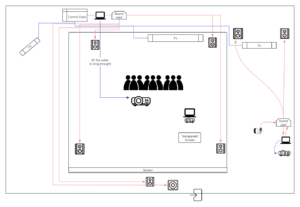

In the initial plan, the corridor scene included an audio-interactive system controlled by Geophone inputs, which was intended to trigger changes in the projector images. However, for unknown reasons, this setup consumed a substantial amount of processing power, causing instability in the operation of the two computers, despite it being just a simple video mixer for live feeds.

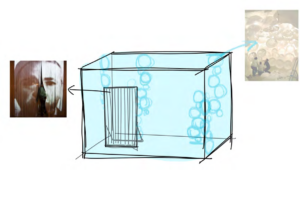

Consequently, we had to make a last-minute substitution with sound-activated light strips controlled by Arduino sensors. These strips were hung on bubble wrap that separated the audience area from the performance space, adding an interactive element to the stage setup.

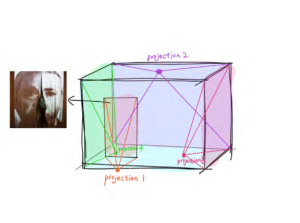

The video system consisted of three projectors and two televisions. The corridor projection used an NEC HD projector, employing a semi-transparent shower curtain as the projection screen, which allowed for viewing from both sides.

The main screen utilized an Optoma Short Throw Projector to minimize the distance between the projector and the screen. This particular feature enabled us to place the projector behind the screen, projecting the image in reverse, and thus freeing up ample space for performances on the main stage.

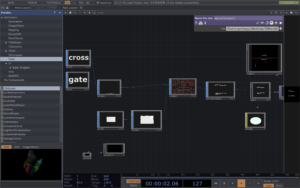

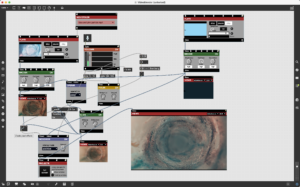

The projection on the left side of the stage employed an ASUS S1 Mobile Projector to project onto a transparent screen from the inside, with some of the light passing through the screen to create dynamic visual effects above the stage. The projection content consisted of variable graphics triggered by sound, created using TouchDesigner.

Implementing this setup, however, presented several challenges, the most significant being the length of the HDMI cables. Our system design intended for a Mac at the main control console to manage the television displays, the main stage sound system, and the main stage projection, ensuring synchronization of sound and visuals. This required HDMI cables of approximately 8 meters in length to avoid crossing performance areas. Unfortunately, on the day of the performance, the longer cables had already been borrowed from Bookit, leading to a lengthy search that eventually procured slightly shorter cables than needed. Safety concerns with the cables also arose during rehearsals; ultimately, we secured all cables to the floor with gaff tape to mitigate any hazards.

Google Drive 5.1 Surround Version Link: https://drive.google.com/file/d/1no6QsiduSEDFbP8MoLaNKxk5yf9KsKQ9/view?usp=share_link

The production of the audio segment commenced relatively late, initiated only after the total duration of the video was firmly established. The production adhered to previously established standards, utilizing a 5.1 surround sound format. Additionally, during rehearsals, we encountered challenges in visually guiding the audience to view specific scenes along the designed path. Consequently, we opted to use sound as the primary means to direct the audience’s attention.

The diagram illustrates the audience’s walking path during the final performance. Initially, the audience follows the green path, where they first pass through suspended balloons, then see TV1 directly ahead and a projector to the left, displaying matching content: AI-generated ocean animations and text animations designed using TouchDesigner. The ambient sound is stereo music. Concurrently, Xianni plays the hand drum in sync with the music, beginning from the middle of the piece, and continues to guide the audience forward as the music nears its end. At this point, TV1 is moved aside to form a pathway, leading the audience into the main stage area (indicated by the blue route). Here, the large screen on TV2 shows a video clip of a carp in the air with a voice-over from the carp, “I see, I jumped over the dragon gate,” emitted from the Ls and Rs speakers. The projection screen and speakers at the front of the stage remain silent to focus the audience’s attention on the back of the stage. As the final words are spoken, the sound shifts from the back to the front; simultaneously, the television turns off and the main projector lights up, prompting the audience to turn and enjoy the performance directly in front of them. Thus, the guidance through sound is completed.
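The back-to-front shift described above can be thought of as a crossfade between the rear pair (Ls/Rs) and the front speakers. The sketch below is only an illustration, not the automation used in our Pro Tools session: it assumes an equal-power (sine/cosine) law, and the function name `rear_to_front_gains` and the `progress` parameter are hypothetical.

```python
import math


def rear_to_front_gains(progress):
    """Equal-power gains for a move from rear (progress=0) to front (progress=1).

    Returns (rear_gain, front_gain). The squared gains always sum to 1,
    so the perceived loudness stays roughly constant during the move.
    """
    p = min(max(progress, 0.0), 1.0)  # clamp to the valid range
    angle = p * math.pi / 2
    return math.cos(angle), math.sin(angle)


# Print the gains at five points along the move (hypothetical step count).
for step in range(5):
    rear, front = rear_to_front_gains(step / 4)
    print(f"progress={step / 4:.2f}  rear={rear:.3f}  front={front:.3f}")
```

An equal-power law is a common choice for this kind of move because a plain linear fade tends to sound quieter at the midpoint.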

With only about a week remaining for audio production, the majority of the sound was edited and designed using sounds from personal and commercial sound libraries to save time. The project’s structure consisted of four main components: vocals, sound effects, ambiance, and music. Vocals included narration and demonic whispers, which were processed through pitch shifting, electronification, and overloading to achieve the desired effects. The ambiance was created using multitrack stereo with sound image variations to produce a surround sound track.

Sound effects were produced in a manner typical of film and television sound production: markers were initially set along the timeline, and the tracks were gradually filled to complement the music and convey emotions. We also experimented with various abstract processing techniques, fully utilizing the advantages of the surround sound track to create rapid, continuous sound image changes, reducing auditory fatigue for the audience. As for the music mix, since the music was initially in stereo format, we added a surround sound reverb send to compensate for the lack of rear surround sound.

Current rent list:

During this week, we conducted our first system connectivity test in the Atrium. Owing to time constraints, we separately tested the interactive installations and the surround sound system components. The surround sound setup consists of five Genelec speakers and a subwoofer, connected to an RME UCXII sound card to receive audio from the computer.

Given the complexity of routing surround sound audio files directly from the computer, during actual performances the main stage’s surround sound audio and projection videos will be played from Pro Tools, with the audio sent to the sound card and the video shown on the first extended display. Meanwhile, the television visuals will be displayed using QuickTime on a second extended display, ensuring there is no interference between the two systems.

Initially, we added spatial sound variations to a segment of pink noise. Through testers listening from the audience’s position, we achieved a relatively balanced effect by real-time adjustments of each speaker’s input level on the virtual mixing console. Subsequently, we played a completed surround sound segment, which resulted in a satisfactory performance outcome.
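We balanced the speakers by ear, but the same adjustment can be expressed as simple arithmetic if level readings are available. The sketch below assumes a sound level meter reading (in dB) per speaker taken at the audience position while pink noise plays; the readings and the names `gain_trims_db` and `db_to_linear` are hypothetical, not measurements from our test.

```python
def gain_trims_db(measured_db, reference=None):
    """Return the trim (in dB) each speaker needs to match the reference level.

    If no reference is given, the quietest speaker is used, so every trim
    is a cut (<= 0 dB) and no channel is pushed louder than measured.
    """
    if reference is None:
        reference = min(measured_db.values())
    return {name: round(reference - level, 2) for name, level in measured_db.items()}


def db_to_linear(db):
    """Convert a dB trim to a linear amplitude multiplier."""
    return 10 ** (db / 20)


# Hypothetical readings at the listening position, one per main speaker.
measured = {"L": 78.0, "C": 76.5, "R": 79.0, "Ls": 75.0, "Rs": 75.5}
trims = gain_trims_db(measured)
for name, trim in trims.items():
    print(f"{name}: {trim:+.1f} dB (x{db_to_linear(trim):.3f})")
```

The resulting trims would then be entered as input level offsets on the virtual mixing console and checked again by ear.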

Following that, we tested the projection system and the Max interactive installation. Our initial idea was to create rock props fitting the scene, inviting audience members to jump around in the setting. We planned to capture the vibrations with a geophone and transform the visuals accordingly. However, in practice, we found the geophone to be extremely sensitive; it could detect strong sound pressure levels even from a distance or as soon as an audience member entered the door, making it challenging to set an activation threshold for the device.

Therefore, we intend to connect the system to percussion instruments, transforming the corridor scene into an interactive performance. When performers strike the drums, it will cause changes in the visuals. Additionally, we plan to optimize the interactive system to make it appear more diverse.
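For reference, one way to approach the threshold problem described above is to derive the trigger level from the room's ambient readings and to add hysteresis so a single event fires only once. This is a sketch under assumptions, not something we tested with the geophone: the sample values, the `margin` and `off_ratio` parameters, and both function names are hypothetical.

```python
from statistics import median


def calibrate_threshold(ambient_levels, margin=3.0):
    """Set the trigger level as a multiple of the median ambient level."""
    return median(ambient_levels) * margin


def detect_events(levels, on_level, off_ratio=0.5):
    """Hysteresis trigger.

    Fires when the level reaches on_level, then re-arms only after the
    level has dropped below on_level * off_ratio.
    """
    events = []
    armed = True
    for i, level in enumerate(levels):
        if armed and level >= on_level:
            events.append(i)
            armed = False
        elif not armed and level < on_level * off_ratio:
            armed = True
    return events


# Hypothetical sensor readings taken while the room is in normal use.
ambient = [0.10, 0.12, 0.08, 0.11, 0.09]
on_level = calibrate_threshold(ambient)

# Hypothetical performance signal: two strong hits with ringing in between.
signal = [0.1, 0.2, 0.5, 0.4, 0.25, 0.35, 0.1, 0.6]
print(detect_events(signal, on_level))  # prints [2, 7]
```

With a sensor as sensitive as the geophone, the ambient readings may already be close to the level of a deliberate jump, in which case no margin separates them and a different input, such as a struck drum, is the more practical choice.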

1. Use two tools, ChatGPT and Runway, to create unique images and videos. Start with ChatGPT to generate an image.

This process begins by defining the type of image you want. Whether it’s a landscape, object, or abstract concept, you need to provide a detailed description, including the scene, objects, colors, and style. Once you have this concept, you can tell ChatGPT, and it will generate a unique image based on your description.

After the image is generated, the next step is to use this image as the basis for video production. This is where Runway comes in. Runway is a machine learning platform for image and video editing. First, register an account and log in on Runway. Then, select an AI model that suits your project; Runway offers a variety of choices, each with different functions. Finally, use its tools and settings to transform and enhance the image, such as adjusting colors, adding dynamic effects, or converting the image into a series of animated videos.

2. Add transitions in Final Cut

3. Create font effects in TouchDesigner:

• Create a text node, modify the font content. Use a merge node to combine different texts. Adjust the text’s position on the Y-axis. Increase the font thickness.

• Create and modify a grid:

Use a grid node to create a grid. Adjust the grid’s length, width, and height to make it rectangular. Add a noise node, connect it to the grid to create dynamic effects.

• Attach fonts to the grid:

Use attribute create to add normals and surface textures. Set up geometry, light, camera, and render nodes for rendering.

• Colors and materials:

Add materials and colors (such as pink) to the fonts. Optimize effects, including adjusting background color and resolution.

• Adding special effects:

Use nodes like feedback, blur, level, etc., to add visual effects. Combine different effects with a composite node.

• Creating a box background:

Create a box node and use geometry and render nodes to make a box background. Add dynamic effects with feedback, transfer, label, and composite nodes. Refine effects by adjusting parameters like scale and opacity.

• Final adjustments:

Adjust the position and angle of the lights. Adjust colors and transparency to ensure a cohesive overall effect. Add a bloom effect to increase brightness and vividness. Finally, add a breathing effect to make the box dynamically change.

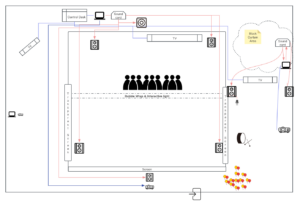

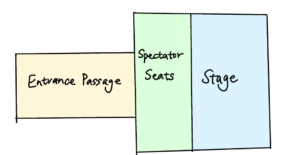

On Monday afternoon, the team conducted its first rehearsal in Alison House, without the actual set. During the rehearsal, our primary focus was on refining the duration and presentation format of each scene, to set a rough timeline for sound production. Below is the preliminary stage equipment layout diagram discussed.

Participants will first pass through a meticulously arranged corridor, which will feature an interactive system that blends with the scene. This includes one to two passively triggered devices and one actively triggered device, with the specific triggering methods still under experimentation. One of the triggering devices implemented so far is a contact microphone. By affixing it to side walls, floors, or surfaces like whiteboards, we can effectively prevent the device from being triggered by people talking. For instance, it could be placed beside the floor, which is arranged to resemble a stream that the audience needs to cross. When the audience steps on it, the device will trigger, causing changes in the Max video. The reference for the Max project is as follows.

The basic principle involves using Video Blender to merge two videos in different ways, while utilizing the volume levels of the audio input in Max to trigger operations in Blender. When the audio input reaches a certain level, it outputs a “bang,” which triggers a switch. This allows for the automatic switching of the videos used in the blending process.
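The trigger logic described above can be sketched outside Max as follows. This is only an illustration of the principle, not a translation of our patch: it assumes the audio input has already been reduced to one level value per frame, and the names `bang_indices` and `active_video` are hypothetical.

```python
def bang_indices(levels, threshold):
    """Return the frame indices where the level rises to or above the threshold.

    Only the rising edge counts, so a sustained loud sound produces one bang.
    """
    bangs = []
    above = False
    for i, level in enumerate(levels):
        if level >= threshold and not above:
            bangs.append(i)
        above = level >= threshold
    return bangs


def active_video(levels, threshold, start=0):
    """Toggle between video 0 and video 1 on every bang.

    Returns the selected video index for each frame.
    """
    bangs = set(bang_indices(levels, threshold))
    selection = []
    current = start
    for i in range(len(levels)):
        if i in bangs:
            current = 1 - current  # the "switch" flips on each bang
        selection.append(current)
    return selection


# Hypothetical input levels, normalised to the range 0..1.
levels = [0.1, 0.2, 0.9, 0.8, 0.1, 0.95, 0.2]
print(bang_indices(levels, 0.7))  # prints [2, 5]
print(active_video(levels, 0.7))  # prints [0, 0, 1, 1, 1, 0, 0]
```

Counting only the rising edge matters here: without it, every frame of a loud sound would flip the switch and the blend would flicker.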

After passing through the corridor, the audience will enter the main stage. Firstly, there will be a pseudo-dynamic moving image on the TV behind the main screen, narrated in reverse along with a voiceover. The provisional script is as follows: “I remember crossing the Dragon Gate. I remember seeing the light. I remember hearing their voices. I remember, I’m almost unable to recall those times.”

Then, the audience will turn their attention to the performance on the main stage. The main stage is equipped with a 5.1 surround sound system and two screens. The front transparent screen is used to display some foreground elements, extending the stage’s visual field. After the performance, a pre-made closing video will be played on TV3 to guide the audience to leave.

We will prepare seven pieces of music for this digital media project. Six of them correspond to our storylines and scripts, and there will be an independent song after the sixth act. The choice of musical instruments and the arrangement of harmonies will be tied to this Chinese mythological story and traditional Chinese culture.

The song will illustrate the theme of our whole story: reflections on the busy, stressful lives of the modern day, as well as thoughts on widespread blind competition. It will be performed live after the storyline.

So far I’ve finished the music for the first act and the sixth act. The first part consists of piano, bamboo flute and chimes. It is worth mentioning that we have a special performance-related design for the dramatic expression of the main character: we want to use the bamboo flute to express the protagonist’s actions, and to play it live.

In the sixth part, emotions are pushed to a climax, and for this section we have prepared a live drum performance.

DMSP 2024 Performance – Submission 1

Links to PDF: Jump_Carp_Jump_DMSP_Performance_Submission1

We aim to reflect on the rat race phenomenon in this involuted generation, so as to rebel against this culture of involution.

Nowadays, we face escalating peer pressure in crowded environments. Yet individuals often push ahead out of habit in a pointless pursuit, competing aimlessly with everyone else.

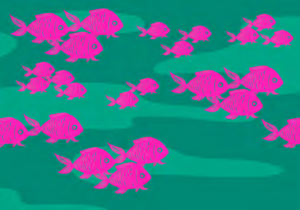

We adapted the story of the carp, revealing our sadness of being trapped in the rat race.

We would set an intimate theatre for the audience, mainly using projectors, lighting and sounds to show the performance (set dressing/ sound design/interactive props).

Research and experiments will be the central practice surrounding the project:

– script writing

– storyboard and visual design

– props making and animation output

– projector setting and lighting tests, location decisions

– music demo

– sound environment

– interactive sound installation

Making screens out of fabric

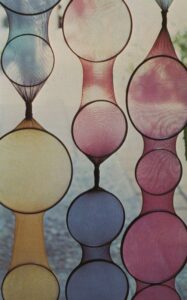

Balloons imitating crowding fish / Fish lantern exhibition in Shanghai

This is Hamlet, directed by Thomas Ostermeier, a theatre production with a screen made of transparent strip curtains.

We would like to apply this idea in our performance to introduce audiences to the more significant stage site behind, letting them find the joy of exploration in this.

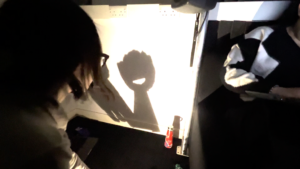

The installation Christian Boltanski by Marcos Rabello shows flickering light cast onto the wall and the shadows of little figures. We intend to cast the audience’s shadows in this way, transforming them from spectators into part of the performance and enhancing participation. However, we need to address and experiment with the risk that audience members think they are blocking the projection and move out of the light. Our goal is to find effective ways to communicate to the audience that they are invited to participate.

A test of the projector inspired by Christian’s work

Explore the possibility of creating a soft screen by projector and curtain for audience members to walk through.

Use different coloured lights to highlight the main characters. A shadow play could also be an option.

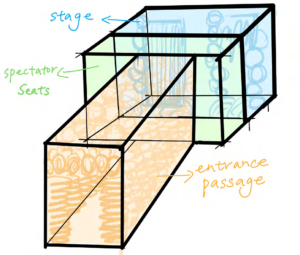

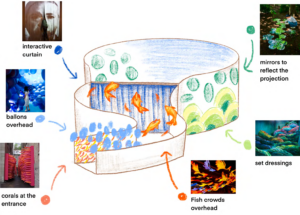

We aimed for an immersive stage experience, divided into three sections: the entry tunnel (orange), performance area (blue), and viewing area (green).

The entrance leads audiences through a narrow passageway decorated with balloons and coral to simulate the carp’s underwater environment. We aim to let the audience understand how the carp survive in the water through this crowded environment.

Upon entering the viewing area, projections in the performance area and on the sides will enhance immersion. Yet, details regarding projection equipment usage and room setup are still pending.

We propose to make an additional foreground curtain on the left side of the central performance area. This versatile curtain serves as both a projection surface and an entry/exit point for actors, enhancing stage hierarchy and facilitating actor movement.

Next, we will visit the designated rooms for field measurements to make necessary adjustments and refine the design for accuracy based on the room’s dimensions.

We made modifications to the setup based on the data from the field measurements (right image).

As you can see, the room will be divided into a small square film theatre in the centre, with a ring of encircling passages around it. Red arrows show the viewing route, which will then be guided by props.

The image below shows the previous set dressing idea; references and illustrations are provided to illustrate the prototype.

To illustrate the conceptual design of our set, I have drawn a schematic storyboard to follow an audience member through the experience of our performance from a third-person perspective.

1.1) There will also be an interactive screen in the passageway, adding engagement.

1) The entrance passageway is decorated with balloons to simulate crowding fish.

2) Fish lamps hanging overhead introduce the context of their living conditions.

3) Follow the projection with crowding moving forward, walking through the curtain to see the next scene behind.

4) Follow the projection with crowding moving forward, walking through the curtain to see the next scene behind.

5) Follow the projection with crowding moving forward, walking through the curtain to see the next scene behind.

6) All lights go out, leaving only bottom lighting behind the audience. Their shadows merge with the projected aquarium.

The projection background mainly explains the environment in which the story develops. The projection is divided into three scenes: the river environment in which the carp lives, the rough river surface when the carp leaps the Dragon Gate, and the carp leaping through the empty door to enter the Heavenly Court.

As this is a traditional Chinese story, the design of the background also incorporates Chinese style, hoping to reflect the cultural background of the story.

Projection Scene Demo 1, Rough river, painted with colour blocks, generated with AI

During our testing of the venue and projector, we discovered that the colors did not match as anticipated, so the image did not appear as expected.

After many attempts, we found that the range and distance of projection equipment we currently use do not match the images in our works.

Response:

We would investigate the next steps, practice the application of the projector, adjust the scale of the image and be flexible in the choice of projection equipment according to practice.

Scene One: crowding living conditions.

Particle effect: using particle technology to simulate the crowding in the river.

With TouchDesigner, we could create streams of particles that look like fish and allow the audience to control the fish’s swimming direction by waving their arms.

Scene Three: toward the illusory dragon gate.

Particle effect: the Dragon Gate formed of particles may change suddenly, releasing light particles that appear to attract the carp but in fact push them toward the trap.

Visual cue: as the carp get captured, the particles on stage shift from an orderly flow to an eruption of chaos, symbolising betrayal and pain.

Scene Five: fall into the aquarium

Particle effect: in the end, the particles in the scene can gradually disappear until the stage is completely darkened, leaving the audience to ponder.

This approach carries the risk of audio-video desynchronization, so our priority is to use a single piece of software for production.

For this part, we’ve created a demo of interactive sound effects using Pro Tools’ 5.1 surround sound format. This demo ranges from normal whispers with a slight reverb to distorted, heavily reverberated sounds resembling a dragon’s rumble, with added surround sound effects.

Given the space constraints in the Scene 1 corridor where the actual setup will take place, it might not be feasible to use surround sound speakers. To address this, we can render the surround sound content in binaural format, which is then outputted to headphones.

This approach allows for an immersive audio experience using a headphone amplifier, supporting multiple audience members simultaneously. This method ensures that the spatial quality of the sound is maintained, providing a realistic and engaging auditory experience that complements the visual and interactive elements of the scene.

https://drive.google.com/file/d/1eaQXOGu59cZgbWMIUCUkM2u7VTatm0as/view?usp=share_link (5.1 dialogue sound design)

“Beyond the gate, freedom calls.”

“Leap into your destiny, find your strength.”

“Embrace the unknown, for it holds your liberation.”

“In the leap, lies the path to transformation.”

“Dare the impossible, become the unimaginable.”

“Seek the gate, embrace your true form.”

“The gate beckons, promising a new dawn.”

“Cross the threshold, claim your power.”

“In the leap, your chains break.”

“The gate is the key, unlock your potential.”

Pro Tools Session Dialogue Design

Sound Environment Demo Audio Link: https://drive.google.com/file/d/1fp9mix5ti7y1oirtB_dtwCjSIWte-D0t/view?usp=share_link

Link of demo:

Furthermore, this original demo retains many traditional Chinese elements, for example Chinese pentatonic scales and several traditional Chinese instruments. The entire composition is structured into three distinct parts:

The first section delineates the environment, spotlighting the protagonist (the carp) navigating its existence within the polluted waters. Traditional Chinese instruments such as the Xun evoke a somber and murky ambiance.

The second segment delves into the character’s actions, emphasizing its leap over the dragon gate and the ensuing series of endeavors.

The third part crescendos into the climax of the piece. Instruments such as the Suona, Pipa, and Bamboo Flute build upon the atmosphere layer by layer, culminating in a climactic finale. The unique technique of the Suona unveils the resolution of the story.

To begin with, try out all the technologies and solve any practical problems with them, then adjust and improve them, including equipment, sound effects, music, lighting control and image display.

Secondly, to bring the project to completion, team members create props, sound effects, lighting, and music, all while staying in touch, sharing ideas, and coordinating timelines to ensure each component is finished on schedule.

Finally, stay vigilant for any project issues as they arise, and address them promptly to enhance workflow efficiency.

In this meeting, Andrew and our team first established the overall storyline and the themes involved. The theme is negative, featuring horror elements, and it involves reflections on pollution and personal growth. After discussion, we decided to focus the narrative design around the protagonist Yue’s psychology. In stage and interactive design, we will incorporate considerations of ocean pollution, allowing the audience to empathize while experiencing the story.

Regarding how to attract the audience, we are considering the use of interactive and immersive sound designs to create music, ambient sounds, and special effects. These special effects include sounds for stage narration and interactive experiences. For example, in the first scene, we plan to use Touch Designer or Max to create an interactive installation. When the audience interacts with the TV, real-time generated images and sounds will appear on it. Additionally, we are thinking of creating props that can be interacted with or remotely controlled, such as rotating fish lamps or hanging balloons, to enhance the audience’s experience.

This document represents a formal record of a meeting jointly submitted by our team.

During this meeting, team members primarily engaged in discussions about various topics and the feasibility of their implementation. The discussions were reoriented based on the remarks made during the lecture. The most critical task identified was to settle on a singular narrative and to progress according to our conceptualized ideas, allowing for creativity within a reasonable scope and enhancing the use of visual and auditory technology.

In our previous discussions, we identified three distinct themes: marine environmental protection, dark fairy tales, and mental health issues. Among these, a complete performance story had already been developed for the dark fairy tale theme. However, considering the difficulty in stage design and prop production, as well as the performance skills of our group members, we decided against choosing a story with human characters as protagonists. Instead, we opted to integrate story elements into animal characters. By combining this approach with the previously selected theme of marine environment, we ultimately decided on a narrative featuring fish as the main characters.

The backdrop of our story is the Chinese fairy tale “The Carp Leaps over the Dragon Gate,” in which the carp, after enduring numerous hardships, leaps over the Dragon Gate and transforms into a real dragon. However, we aimed to adapt this into a dark fairy tale, deepening the thematic essence of the story to enhance audience empathy. By integrating elements of marine environment and mental health, we have developed the following script:

Play Script (15-20 minutes)

Scene One: The Polluted River

Scene Two: The Harsh Journey

Scene Three: The Illusory Dragon Gate

Scene Four: Aquarium Imprisonment

Scene Five: The Final Awakening and Departure

After watching the television series ‘Chernobyl’, my understanding of nuclear contamination has deepened significantly. However, there remains a widespread lack of awareness among many globally about the dual nature of nuclear energy as both a clean energy source and a producer of formidable pollution.

In our group’s initial discussion, we recognized the diverse strengths of each member and drafted a preliminary plan for the theme. To facilitate scene setting, prop creation, and atmosphere design, we unanimously agreed on creating an underwater scenario, allowing for greater creative expression in the visual direction. This decision naturally led to the incorporation of themes related to nuclear waste water discharge. As a topic, it doesn’t have to dominate the drama but can be integrated as one of its elements. For instance, it could depict marine life struggling to return home, hindered in the last leg of their journey by nuclear wastewater, or malformed creatures born in polluted seas attempting to escape.

The strength of this theme lies in its expressiveness, particularly in sound design. The natural terror evoked by the sound of a Geiger counter can be amplified through surround sound systems, offering the audience a more profound experience. Additionally, the use of fluorescent green props in a dark space can effectively immerse the audience in the environment.

Horror scenes inherently excel in their expression through sound and lighting design. The employment of a continuous low-frequency heartbeat, sudden noises, audio spatial changes, and processed human voices can adeptly sculpt the ambiance of a performance.

Similarly, lighting control offers a multitude of options, such as sudden flickering, cool-toned lighting, fluorescent-colored scenes, and the utilization of shadows and silhouettes. These elements synergistically contribute to the creation of a profoundly immersive and unsettling atmosphere characteristic of horror settings.

Traditional performance: A musical, dramatic, or other entertainment presented before an audience. Digital media performance includes virtual reality and robot performance work, telematic performances in which remote locations are linked in real time, Webcams, and online drama communities, and considers the “extratemporal” illusion created by some technological theater works.

Dixon, Steve. 2007. Digital Performance: A History of New Media in Theater, Dance, Performance Art, and Installation. Cambridge, MA: The MIT Press. https://doi.org/10.7551/mitpress/2429.001.0001.

An artistic performance can be crafted through the use of one or two backdrop curtains in combination with projected imagery. This can be complemented by handcrafted elements such as balloons, fish-shaped lanterns, or models to enhance the foreground of the stage. Simultaneously, controlled lighting or projectors from above can be synchronized with real-time audio and video animations to create an immersive atmosphere.

Possible Max Visual-Audio Solution

An example of stage arrangement consisting of a projector and foreground models

We have considered a multi-faceted approach for our stage setup, involving the use of multiple curtains, projectors, and meticulously crafted foreground models. Each scene, along with its corresponding foreground elements, is individually created and controlled by team members. The performance involves the synchronization of lighting, pre-recorded videos, sound effects, and live manipulation of foreground models, immersing the audience in various scenes and emotions. However, one drawback of this approach lies in the complexity of system setup, including sound arrangement, which can potentially lead to technical issues and synchronization challenges during live performances.

Another option I want to explore involves the use of one or two fixed screens for video projections. In this setup, some foreground models remain static while others can be interchanged during scene transitions or utilized as props by the performers. This approach offers the advantage of greater system control, reduced risk of technical glitches, and simplified synchronization of audio and visuals. It ensures a smoother and more reliable live performance experience, as sound and visuals do not interfere with each other.

These two approaches can be integrated with the previously mentioned audience-experience elements of “suspended balloons” and the pairing of light projectors, to enhance the overall effect of the live performance. Additionally, the music can be pre-arranged to include sections for expressive live instrumental performances, or to allocate certain rhythms for audience interaction, making the experience more engaging and dynamic.