TouchDesigner real-time line

To enrich the main stage, I planned to add a secondary screen on the left side of the stage and use visual effects created in TouchDesigner on it to support the main-stage performance. The original plan was to set up a camera in front of the main stage so that the dancer’s movement and body outline would appear on the screen whenever the dancer performed. During testing, however, I found that the main stage was not lit brightly enough for TouchDesigner to pick out the performers, so we planned to set up LED lights on both sides of the stage. The lights were heavy, though, and we were worried about the safety risk of hanging them insecurely, so in the end I used this interactive element in two parts of the main-stage performance: the devil whispering part and the part where the fish struggle as they jump the gantry.

Since this idea came at a late stage, there was not enough time to prepare a Kinect camera. Instead, I turned to ZigSim, a mobile app that lets the phone act as a sensor and external camera and transmits its video signal to TouchDesigner over the network.

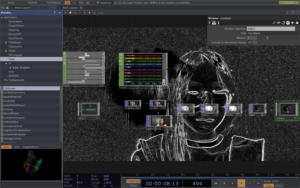

In TouchDesigner, a cache stores frames from the video, and motion tracks are obtained through difference detection between frames. The motion contours are then enhanced by adjusting brightness and contrast and applying edge detection. To eliminate blemishes in the image, a large filter size is used for blurring. Adjusting the minimum value of the Chroma Key reduces the number of black pixels in the image, and this setting can be tuned for different environments.